Building a Fact-Grounded Investment Banking Pitchbook System with Google Antigravity

From our discussions with customers, pitchbook creation remains one of the most manual parts of the investment banking workflow. Even though the output is highly standardized, typically a 15-17 slide deck, teams still spend significant time building pitchbooks by hand.

Customers commonly cite 40-60 analyst hours per pitchbook, with much of that effort going to non-analytical work: data extraction, formatting, chart production, and brand alignment. Although many banks have invested in AI, customers tell us these implementations often prioritize surface-level efficiency (refreshing data, applying templates, and populating standardized slides), while the most valuable components (deal thesis, positioning narrative, and client-specific framing) remain dependent on human judgment.

This suggests the bottleneck is not purely automation. It’s a design problem.

And like any design problem, the solution starts with the right designer: someone who deeply understands pitchbook craft, how bankers think, how narratives are built, what good looks like, and why those standards shift by client, sector, and situation. That domain-first design becomes the blueprint for what AI can reliably accelerate, what must remain human-led, and how the workflow should function end-to-end.

Which leads to the real shift: we didn’t start by prompting models; we started by designing a system.

From Prompting Models to Designing Systems

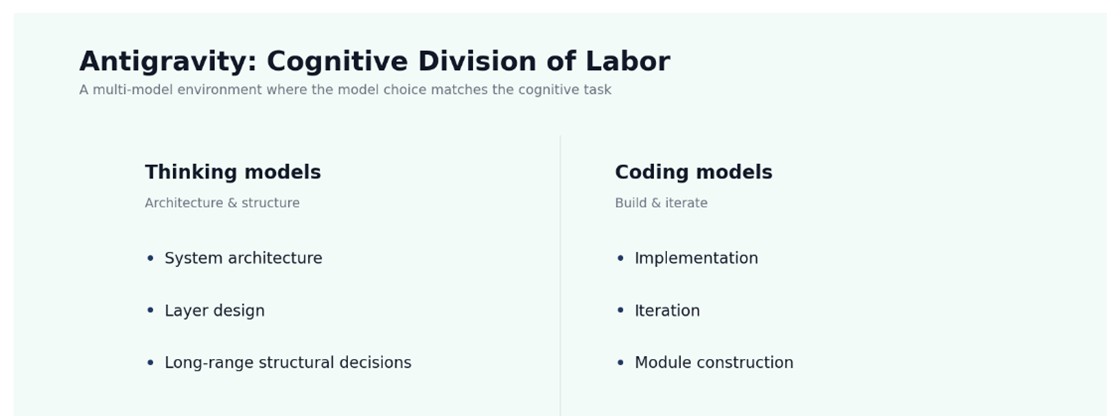

Most enterprise AI initiatives begin with familiar concerns: model selection, prompt engineering, and output quality. Our effort didn’t.

If the bottleneck is design, the solution can’t start with prompts. It has to start with the conditions under which the output can be trusted, and with a domain-informed approach to encoding those conditions into the workflow itself.

We asked:

• What must be structurally true for an output to be trusted?

• What guarantees must the architecture provide?

• Is the constraint the model - or the workflow?

• How do we stay flexible and company-agnostic without sacrificing rigor?

The answer was not humans and AI working in parallel, but humans and AI orchestrated, with accountability built in.

And once you view it that way, the next step becomes obvious: define the domain principles before writing any code.

Domain-First Design: System Principles Before Code

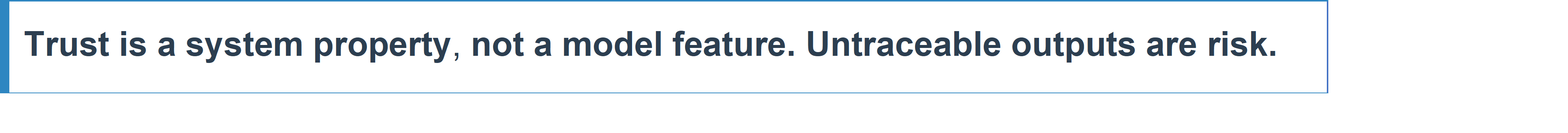

In pitchbooks, the cost isn’t just time; it’s accountability. These decks don’t live in a vacuum: they travel through committees, legal review, and client scrutiny. And that changes what automation means. It’s not enough to produce something that looks right. A pitchbook has to be defensible: every number must be traceable, every claim must be explainable, and every slide must hold up under questions.
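One way to picture "every number must be traceable" is a record that carries its own evidence. The sketch below is illustrative only: the `SourcedFigure` class, its field names, and the sample values are assumptions made for this example, not the schema the system actually uses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SourcedFigure:
    """A number on a slide plus the evidence needed to defend it (hypothetical schema)."""
    label: str
    value: float
    source_document: Optional[str] = None  # e.g. a filing or data-room file name
    locator: Optional[str] = None          # e.g. a page, table, or cell reference

    def is_traceable(self) -> bool:
        # A figure is defensible only if both the document and the location are known.
        return bool(self.source_document) and bool(self.locator)


def untraceable_figures(figures: list[SourcedFigure]) -> list[str]:
    """Return the labels of figures that have no recorded source."""
    return [f.label for f in figures if not f.is_traceable()]


# Made-up sample data for illustration.
slide = [
    SourcedFigure("FY2024 revenue", 1250.0, "annual_report_2024.pdf", "p. 47, income statement"),
    SourcedFigure("EBITDA margin", 0.23),  # no source recorded
]
print(untraceable_figures(slide))  # ['EBITDA margin']
```

A check like this could run before any slide is rendered, so a figure without a source blocks the deck rather than surfacing in committee.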

We approached the system with a domain-first mindset.

That’s where Google Antigravity changed the nature of the work, not as an IDE but as a collaborator that could translate between domain architecture and the modular code it needed to become.

Before writing a single line of code, we defined four guiding principles for the system.

Building the Pipeline: Iterative Collaboration in Action

Every significant feature began with a domain constraint rather than an engineering task. That distinction shaped how the system evolved.

How Antigravity Enforced a Constraint-Driven Architecture

Once the design principles were clear, the focus moved beyond what needed to be built to how those principles would endure in practice.

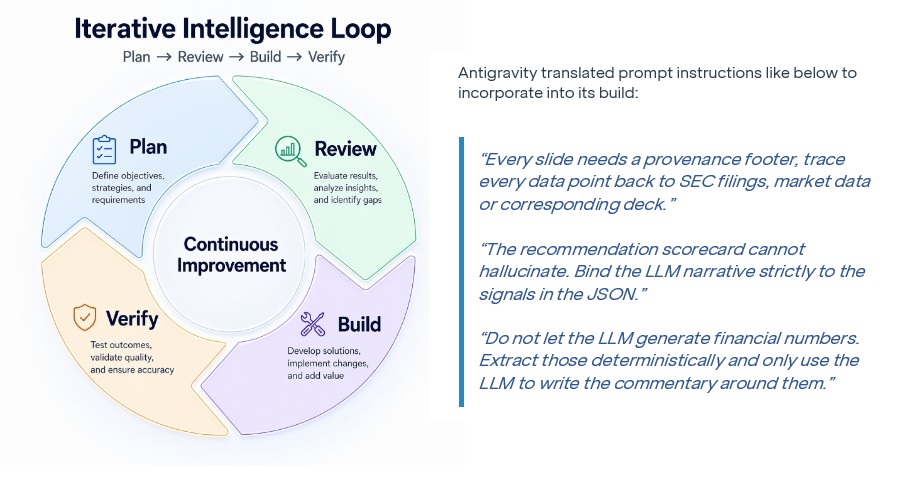

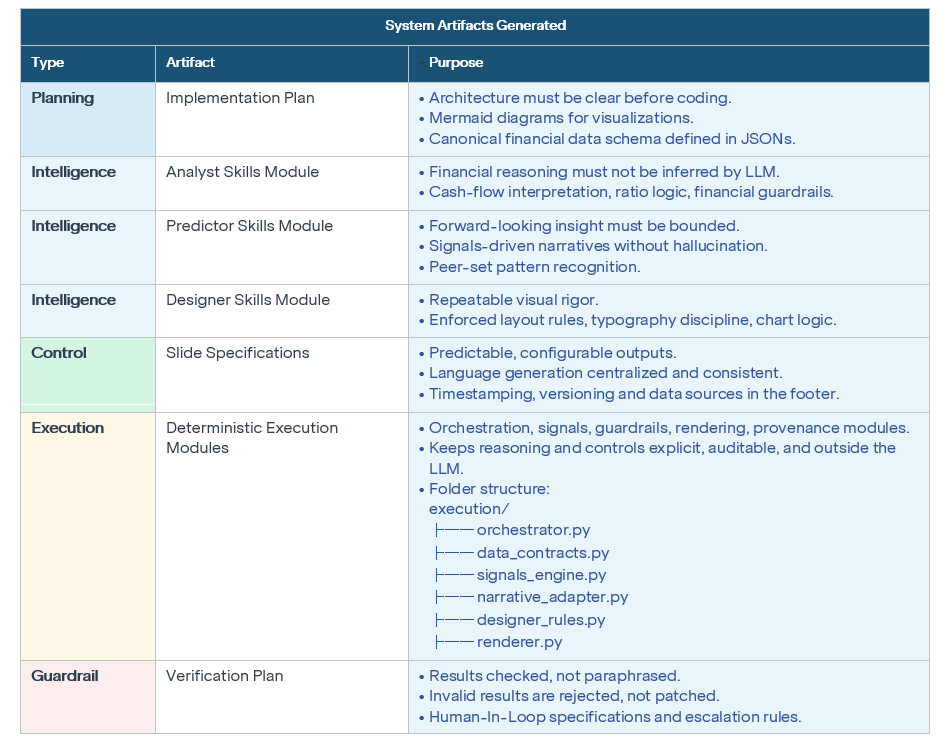

Iterative collaboration with the agentic builder, grounded in domain-constrained prompting and stepwise planning, transformed intent into a system of artifacts, execution constructs, and guardrails.

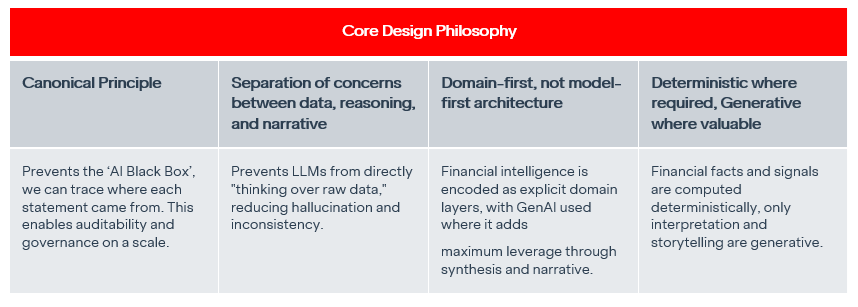

The Architecture That Emerged

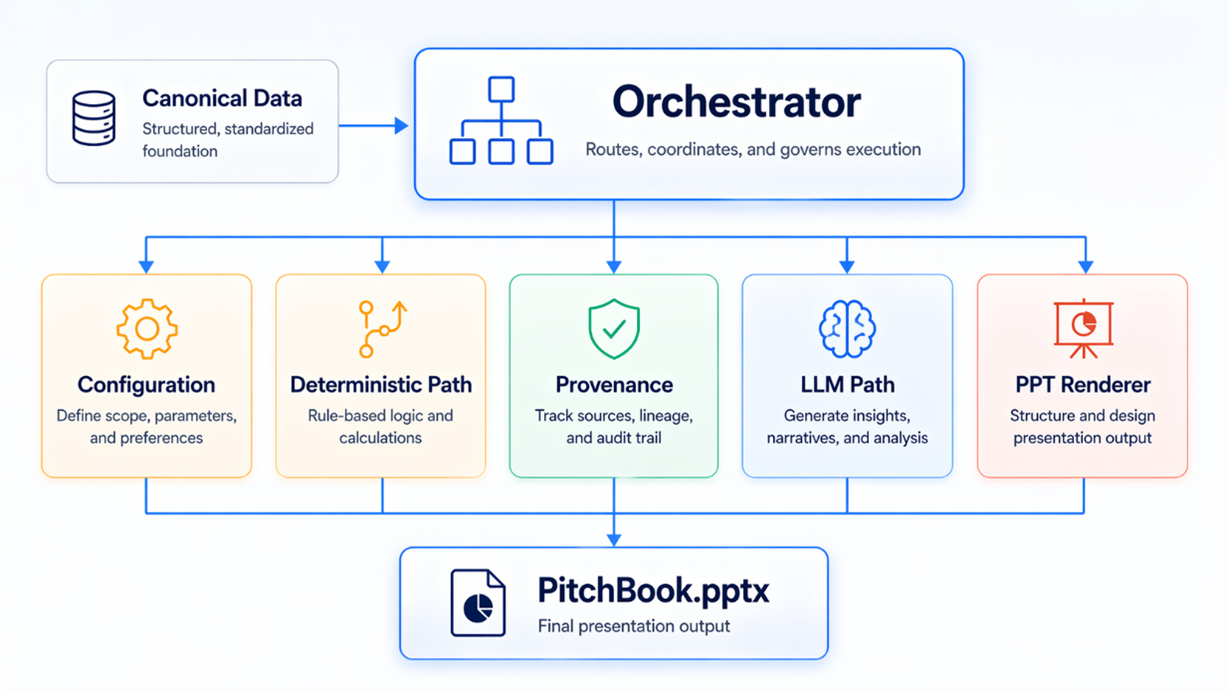

What emerged was a structured data flow pipeline shaped by constraint rather than convenience.

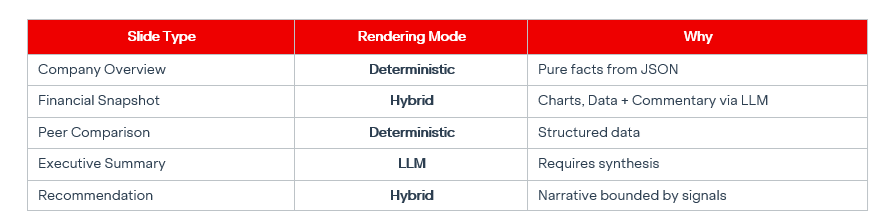

The most important architectural decision was restraint. Enterprise AI maturity is not about where to use AI. It is about knowing where not to.

This was not just a technical decision. It was a reframing of how financial intelligence is constructed. Instead of relying on models to infer correctness, the system encoded correctness upfront. Instead of defaulting to AI, it applied it selectively, where it added real value.
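As a rough illustration of encoding correctness upfront and applying AI selectively, the sketch below computes a metric deterministically from canonical data and then checks model-written text against the numbers that are allowed to appear. The function names, the `CANONICAL` keys, and all values are assumptions for this example; the `draft` string stands in for model output.

```python
import re

# Canonical data: the single, validated set of values every slide draws from.
# The keys and numbers here are made up for illustration.
CANONICAL = {"revenue_fy24": 1250.0, "ebitda_fy24": 287.5}


def ebitda_margin_pct(data: dict[str, float]) -> float:
    """Deterministic calculation: no model is involved in producing the number."""
    return round(100 * data["ebitda_fy24"] / data["revenue_fy24"], 1)


def unsupported_numbers(narrative: str, allowed: set[float]) -> list[float]:
    """Return numbers in model-written text that are not in the allowed set."""
    found = [float(n) for n in re.findall(r"\d+(?:\.\d+)?", narrative)]
    return [n for n in found if n not in allowed]


margin = ebitda_margin_pct(CANONICAL)
allowed = set(CANONICAL.values()) | {margin}

# Stand-in for text returned by a language model.
draft = "EBITDA margin of 23.0% on revenue of 1250.0, up from 21.5% last year."
print(unsupported_numbers(draft, allowed))  # [21.5]
```

In this arrangement the model only writes framing; any figure it introduces that is absent from the canonical set is flagged for human review instead of being trusted.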

Conclusion: Scaling Financial Intelligence with Agentic Design

This project began with a simple premise: if a pitchbook is the most consequential artifact in investment banking, its creation should be just as deliberately designed. By combining a Domain-First Architecture with the agentic capabilities of Google Antigravity, we have moved beyond “vibe coding” into a world where intelligence is reliable by construction.

The Business Impact: Breaking the Productivity Plateau

The shift from manual slide-building to an orchestrated agent system delivers measurable bottom-line value. Our results align with broader industry benchmarks showing that agentic systems are finally breaking the productivity plateaus of the previous AI generation.

- 80.8% Boost in Financial Reasoning: According to Google’s 2026 research, ‘Towards a Science of Scaling Agent Systems’, centralized agent coordination (similar to our three-layer architecture) improves performance on parallelizable tasks like financial reasoning by over 80% [ii].

- 3.9x Reduction in Operational Risk: By enforcing "Canonical Data" and "Separation of Concerns," we effectively contain error amplification. Research shows that centralized coordination restricts error propagation to just 4.4x, compared to 17.2x for uncoordinated independent agents [iii].

- Scaled Efficiency: Institutional research by McKinsey indicates that early adopters of agentic workflows see 20–60% productivity gains and a 30% reduction in operational costs [iv].

- Mainstream Adoption: This is no longer a niche experiment; Gartner predicts that 40% of all enterprise applications will feature task-specific AI agents by the end of 2026 [v].
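The 3.9x headline in the list above appears to be the ratio of the two error-amplification factors it quotes. A quick check, assuming that reading:

```python
# Error-amplification factors quoted in the list above from the cited research.
independent_agents = 17.2
centralized_coordination = 4.4

# How many times smaller the amplification is under centralized coordination.
ratio = independent_agents / centralized_coordination
print(f"{ratio:.1f}x")  # 3.9x
```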

A trustworthy AI need not know everything; it needs to know what it does not know.

References

i. Antigravity, Google Cloud: https://antigravity.google/

ii. Towards a science of scaling agent systems: When and why agent systems work, Yubin Kim and Xin Liu, arXiv, 2026: https://arxiv.org/html/2512.08296v1

iii. Towards a science of scaling agent systems: When and why agent systems work, Yubin Kim and Xin Liu, arXiv, 2026: https://arxiv.org/html/2512.08296v1

iv. State of AI trust in 2026: Shifting to the agentic era, Gabriel Morgan Asaftei, Roger Roberts, Abby Sticha, Cécile Prinsen, McKinsey & Co, March 25, 2026: https://www.mckinsey.com/capabilities/tech-and-ai/our-insights/tech-forward/state-of-ai-trust-in-2026-shifting-to-the-agentic-era

v. Gartner Predicts 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026, Up from Less Than 5% in 2025, Gartner, September 5, 2025: http://gartner.com/en/newsroom/press-releases/2025-08-26-gartner-predicts-40-percent-of-enterprise-apps-will-feature-task-specific-ai-agents-by-2026-up-from-less-than-5-percent-in-2025

vi. What is agentic coding?, Google Cloud: https://cloud.google.com/discover/what-is-agentic-coding